As mentioned, we had a keynote presentation at the Financial Services Club from Andrew Haldane, Executive Director responsible for Financial Stability with the Bank of England, last week.

The presentation was delivered under the Chatham House Rule, so I cannot say too much about what Andy covered, but there was a theme throughout that may surprise many of you.

The financial services markets are technology markets.

They have been technology-driven markets since the earliest days of my work in computing – yes, before the punched card and the dinosaurs – and so you would think they would be the most technically efficient.

They are not.

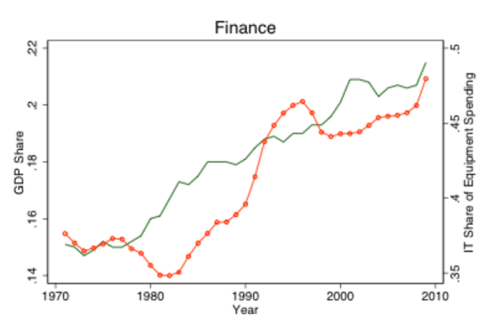

Andy picked up on research by the American academic Thomas Philippon of New York University, which shows that the markets are inefficient.

His findings indicate that the unit costs for finance have risen over the past century.

In other words, the financial markets of Rockefeller and J.P. Morgan a century ago were more efficient at a unit cost level than the markets of today.

That is exceptional, and exceptionally disappointing.

How come?

From Thomas Philippon’s report:

“I find that the unit cost of intermediation has increased since the mid-1970s and is now significantly higher than it was at the turn of the twentieth century. In other words, the finance industry that sustained the expansion of railroads, steel and chemical industries, and later the electricity and automobile revolutions seems to have been more efficient than the current finance industry.”

He further finds “that this (annual) unit cost is around 2% and relatively stable over time. In other words, I estimate that it costs two cents per year to create and maintain one dollar of intermediated financial asset.”
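To make that two-cents figure concrete, here is a minimal sketch of the arithmetic. It assumes, based on my reading of the quote above, that unit cost is simply the finance industry's annual income divided by the stock of intermediated assets. The function name and the figures are hypothetical, chosen only to reproduce the 2% in the quote; they are not Philippon's data.

```python
def unit_cost(finance_income: float, intermediated_assets: float) -> float:
    """Annual cost of intermediation per dollar of intermediated assets.

    Assumption: unit cost = finance income / intermediated assets,
    a simplified reading of the quote, not Philippon's full method.
    """
    if intermediated_assets <= 0:
        raise ValueError("intermediated_assets must be positive")
    return finance_income / intermediated_assets


# Hypothetical figures, picked only to match the 2% quoted above.
income = 2.0    # dollars of finance income per year
assets = 100.0  # dollars of intermediated financial assets

cost = unit_cost(income, assets)
print(f"Unit cost: {cost:.2%} per year")
```

On this reading, the unit cost only falls if income grows more slowly than the assets being intermediated, which is where the compensation argument comes in.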

The bottom line of Philippon’s findings is that bankers’ compensation is increasing, which keeps the unit cost static even though technology is automating more of the work:

“The income share grows from 2% to 6% from 1870 to 1930. It shrinks to less than 4% in 1950, grows slowly to 5% in 1980, and then increases rapidly to more than 8% in 2010. Surprisingly, the tremendous improvements in information technologies of the past 30 years have not led to a decrease in the average cost of intermediation, or at least not yet.”

In other words, income is going up whilst costs stay the same or, putting it simplistically, banks have taken all the gains from technology and redistributed them as payments through bonuses and increased salaries (or so Philippon implies).

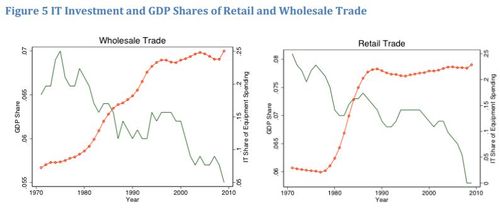

That is why the charts show that general trade firms have radically pushed down costs through technological efficiencies whilst banking has not.


Shame.

The other piece of this, however, is the fuelling effect that technology has had on complexity.

I’ve written about this several times before, showing my favourite charts of Goldman Sachs and credit derivatives, but Andy made things a little clearer when he outlined the complexity of Asset-Backed Securities (ABS).

The original Residential Mortgage Backed Securities (RMBS) could be understood by reading about 200 pages of documentation;

Collateralised Debt Obligations (CDO) would require reading about 30,000 pages of information; whilst

CDO-squared, where Credit Default Swaps multiplied the risks, would require reading around 1.125 million pages of documentation to get close to tracking the global complexity of such instruments.

Put another way, in the first iteration of the Basel Accord there were seven risk metrics requiring seven calculations; by the time we get around to implementing Basel III, over 200,000 risk categories will require over 200 million calculations.

Between the automation of the system, the overpayment of the operators, the silo approach to finance and the complexity such silos create with inherent risk, there is a strong probability that the new Bank regulator will seek to ensure far more transparency through automation and integration to avoid arbitrage risks.

Or something like that anyway.

Chris M Skinner

Chris Skinner is best known as an independent commentator on the financial markets through his blog, TheFinanser.com, as author of the bestselling book Digital Bank, and Chair of the European networking forum the Financial Services Club. He has been voted one of the most influential people in banking by The Financial Brand (as well as one of the best blogs), a FinTech Titan (Next Bank), one of the Fintech Leaders you need to follow (City AM, Deluxe and Jax Finance), as well as one of the Top 40 most influential people in financial technology by the Wall Street Journal's Financial News.